Building The Organisational Brain

Everyone's wondering whether they picked the right AI tool or whether their people are good enough at prompting. The real question is whether AI knows enough about your organisation to be useful regardless of which tool you pick. For almost everyone I'm speaking to, the answer is no.

ChatGPT or Claude? (Claude. Every time.) Copilot or Gemini? A chatbot or a dedicated agent? Should we train the whole team, or start with a few power users?

Over the past few months, I've had about fifty conversations about AI with organisations. Nearly all of them are working through some version of these questions. Which platform. Which use cases. Whether their people are skilled enough to get value out of it.

These aren't bad questions. But they're premature.

The question I'm starting to see underneath all of them is simpler and harder: does AI know enough about your organisation to be useful, regardless of which tool you pick?

For almost everyone I'm speaking to, the answer is no.

The Knowledge Gap

Off-the-shelf AI doesn't know how your organisation works. It doesn't know your customers, your processes, your product lines, or what your best people do differently from everyone else. Every AI tool — whether it's ChatGPT, Claude, a $3K/month agent platform, or a custom build — is guessing until that knowledge is structured and accessible.

For the past two decades, organisations have obsessed over data integrity — clean, structured, consistent data as the foundation for reporting, performance analysis, and decision-making. That discipline still matters. But it's no longer enough.

We're not just making decisions on our own anymore. We're using AI as a thought partner — to deepen thinking, draft strategies, prepare briefings, analyse patterns. And AI can pull information from a CRM or a dashboard. But the deeper knowledge — how your organisation actually makes decisions, what your best people know that your newest people don't, how you talk to clients, what you've learned from ten years of doing this work — that usually just sits in people's heads.

Data integrity was the discipline of the systems era. Knowledge integrity is the discipline of the AI era. And almost nobody is thinking about it yet.

The Organisational Brain

The infrastructure that solves this is what I call the organisational brain: a living knowledge layer that AI works with to make every interaction smarter.

The work is codification: making the implicit explicit — capturing how your organisation actually operates (not how you think it operates) and structuring it so AI can reason over it.

The surprising part: this isn't primarily a software project. It's a knowledge architecture project. The build is mostly markdown, not code. Structured documents, databases, decision logs, process descriptions — the kind of thing that lives in Notion or a similar tool, not in a codebase.

Why is nobody talking about this yet? Partly because the models only became genuinely capable of it three or four months ago. With context windows expanding to a million tokens, AI can now read almost everything about an organisation every time it enters a conversation. A year ago, a model would have got lost: it would have started compressing, lost the thread, and forgotten what mattered. The infrastructure is only possible because the models have caught up.

I arrived at this gradually. I'd been using Claude Code for months, where evolving documentation was accessible to the model every session — it knew my business because my methodology, positioning, and client context were all right there. Replicating that in regular Claude via project files was painful: static uploads, manual re-uploads, accidentally deleting the wrong version. The shift came when MCP connectors let Claude read from and write to Notion directly. That's when the organisational brain became a living system rather than a folder of documents.

What Gets Codified

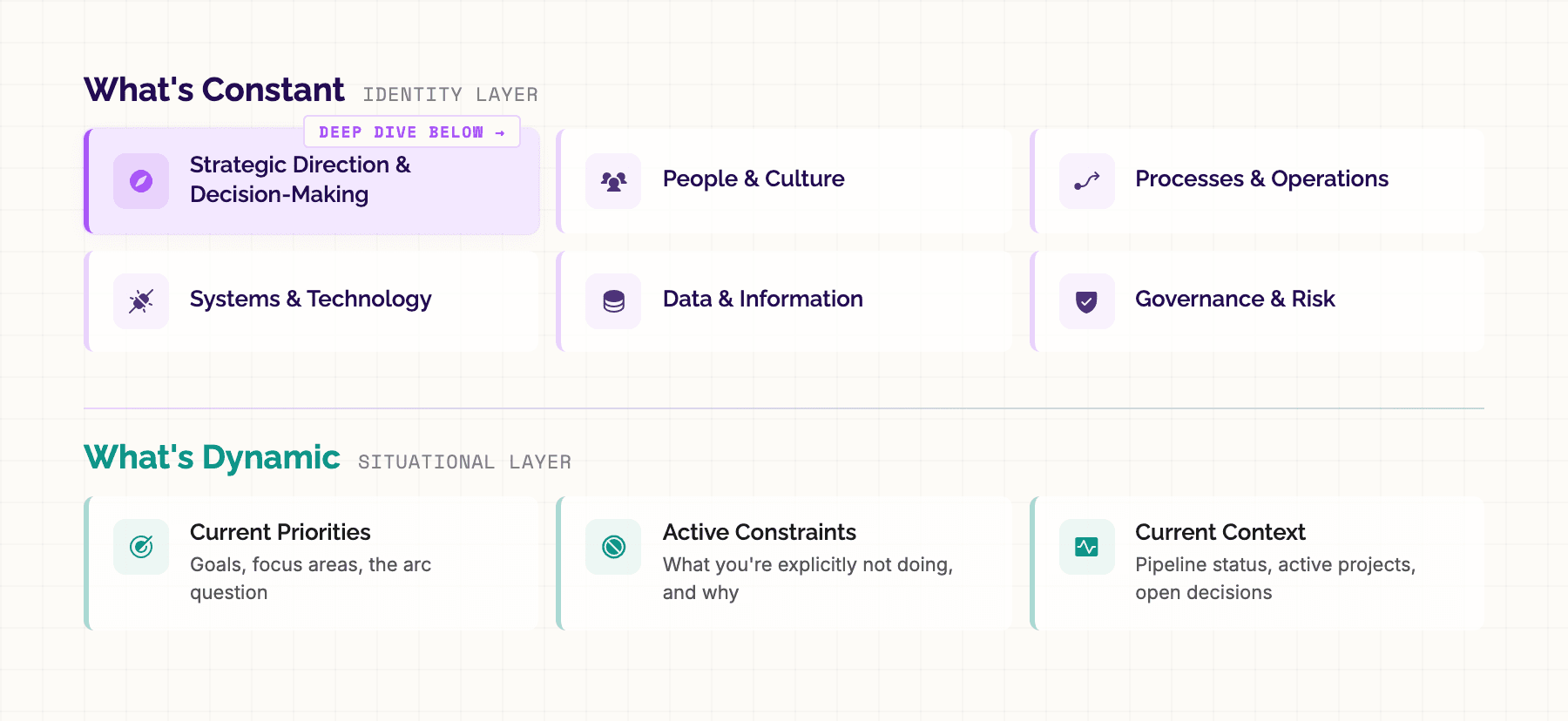

The organisational brain isn't just one thing. It covers every dimension of how your organisation operates:

Strategic direction and decision-making: where you're heading and how trade-offs get made.

People and culture: what good performance looks like and how the team works.

Processes and operations: how things actually flow, who your customers are, how you talk about your work.

Systems and technology: what's in the stack and how ready it is.

Data and information: where it flows, where it gets stuck.

Governance and risk: how decisions are governed and what the boundaries are.

Each domain has knowledge that, once codified, fundamentally changes what AI can do for you. But the real insight isn't about breadth. It's about the interplay between two layers.

What's constant — your identity. Who you are, what you're building toward, how you operate. Your positioning, your values, your practice model, your strengths, and your known patterns and tendencies. This doesn't change month to month. When it's defined clearly, every interaction with AI is oriented. It knows what lens to apply, what language to use, what matters to you.

What's dynamic — your current situation. What you're focused on right now, what constraints you're operating under, and critically, why those constraints exist. This is the living, breathing layer — it changes with each quarter, each sprint, each planning cycle. It's what makes AI's advice contextually right rather than generically good.

Take one without the other and you get half the picture. The constant layer alone gives you a generic assistant that knows your brand guidelines but has no idea what you're wrestling with this week. The dynamic layer alone gives you a task manager that doesn't understand why the tasks matter.

Both together give you an AI that can reason about your situation — not just answer questions, but weigh trade-offs alongside you.
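The brain itself is documents, not code. Still, a minimal sketch helps show how the two layers combine. It assumes each layer is kept as structured notes and stitched into one markdown block at the start of a conversation. The class and field names here (`ConstantLayer`, `DynamicLayer`, `build_context`) are hypothetical, not the author's Notion setup.

```python
from dataclasses import dataclass, field

# Hypothetical structure: field names are illustrative, not the author's Notion schema.
@dataclass
class ConstantLayer:
    """Identity: who you are. Changes rarely."""
    positioning: str
    values: list[str]
    known_patterns: list[str]

@dataclass
class DynamicLayer:
    """Current situation. Changes each quarter, sprint, or planning cycle."""
    focus: str
    # Each constraint maps to the reason it exists, so the model sees the "why".
    constraints: dict[str, str] = field(default_factory=dict)

def build_context(identity: ConstantLayer, situation: DynamicLayer, question: str) -> str:
    """Render the constant layer, then the dynamic layer, then the question, as one markdown block."""
    lines = ["# Who we are", f"Positioning: {identity.positioning}", "Values:"]
    lines += [f"- {v}" for v in identity.values]
    lines.append("Known patterns:")
    lines += [f"- {p}" for p in identity.known_patterns]
    lines += ["", "# Where we are now", f"Focus: {situation.focus}", "Constraints (and why):"]
    lines += [f"- {name}: {reason}" for name, reason in situation.constraints.items()]
    lines += ["", "# Question", question]
    return "\n".join(lines)

if __name__ == "__main__":
    identity = ConstantLayer(
        positioning="Independent AI practice",
        values=["example value"],
        known_patterns=["Natural gear is building"],
    )
    situation = DynamicLayer(
        focus="Generate conversations, clients, and subscribers",
        constraints={"Platform freeze": "Pipeline matters more than new features this month"},
    )
    print(build_context(identity, situation, "Should I build this new idea now?"))
```

Dropping either layer from `build_context` reproduces the two failure modes: a brand-aware assistant with no sense of this week, or a task list with no reasons attached.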

Let me show you what that looks like for strategic direction.

Strategic Direction in Practice

I run my business in monthly arcs. Each arc has a question it's trying to answer, a set of parallel workstreams with specific beats and definitions of done, explicit constraints, guardrails, and a risk register.

That's my wall from the start of March. It works. There's something about putting my blue painter's tape on this board when something's done that no project management tool has ever replicated. I'm going to keep doing it.

The wall is for me. But I also documented the whole arc in Notion and uploaded it to Claude as a project file. From the outset, Claude had everything — the arc question, the workstreams, the constraints, and the rationale behind each one.

My March arc included:

An arc question — "Can the ecosystem I've built generate conversations, clients, and subscribers before the revenue cliff arrives?" — that anchored every decision against a single test.

An arc map with four parallel workstreams: Activate the Network (sales outreach), Go Public (LinkedIn and content), Close CG (finishing a client project), and a constraint arc — Timebox the Builder.

Explicit constraints with rationale. Side-quests frozen. Platform freeze — no new features on any of my products. And the critical one: builder impulse redirected. When the urge to build hits, redirect that energy into content about building rather than actual new builds.

That last constraint existed because I know myself. My natural gear is building. The temptation to retreat into code instead of doing outreach, sending emails, and posting on LinkedIn is real. I will find a way to justify it every time. The logic will be airtight. And I'll surface three weeks later with something impressive and no pipeline.

The constraint didn't just say "don't build." It named the pattern, explained why it was dangerous right now, and gave me an alternative path for the energy.
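A minimal sketch can show that shape. It assumes an arc is stored as structured data and rendered to markdown for a Claude project file. The names `Arc`, `Constraint`, `why_now`, and `redirect` are hypothetical. The content comes from the March arc above. The point is that each constraint carries its pattern, its reason, and an alternative path, not just a rule.

```python
from dataclasses import dataclass

@dataclass
class Constraint:
    name: str
    pattern: str   # the tendency being guarded against
    why_now: str   # why it is dangerous in this particular arc
    redirect: str  # where the energy should go instead

@dataclass
class Arc:
    question: str
    workstreams: list[str]
    constraints: list[Constraint]

    def to_markdown(self) -> str:
        """Render the arc as a markdown document a model can read each session."""
        out = [f"## Arc question\n{self.question}", "## Workstreams"]
        out += [f"- {w}" for w in self.workstreams]
        out.append("## Constraints")
        for c in self.constraints:
            out.append(
                f"### {c.name}\n- Pattern: {c.pattern}\n- Why now: {c.why_now}\n- Redirect: {c.redirect}"
            )
        return "\n".join(out)

march = Arc(
    question=("Can the ecosystem I've built generate conversations, clients, "
              "and subscribers before the revenue cliff arrives?"),
    workstreams=[
        "Activate the Network (sales outreach)",
        "Go Public (LinkedIn and content)",
        "Close CG (finishing a client project)",
        "Timebox the Builder (constraint arc)",
    ],
    constraints=[Constraint(
        name="Builder impulse redirected",
        pattern="Retreating into code instead of outreach, with an airtight justification every time",
        why_now="Weeks lost to a build mean something impressive and no pipeline before the revenue cliff",
        redirect="Write content about building instead of starting new builds",
    )],
)

if __name__ == "__main__":
    print(march.to_markdown())
```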

Because Claude had all of this, what happened next actually surprised me.

When AI Argues Back

I've written before about using AI as a thinking partner. As a solo practitioner, I've got great mentors I talk to — but I can't call someone every time I want to workshop an idea or pressure-test a decision. AI fills that gap. Someone to push against, to test ideas with, to hold me accountable.

But that only works if AI actually knows enough about my situation to push back intelligently.

Early in March, I had a genuine breakthrough about how AI tools should handle context — a real architectural insight, not just a feature idea. Three things were converging at once: it would improve my own content workflow, it would give me a proof of concept to show clients, and a potential business partner was interested in exactly this kind of product.

The case for building it now felt airtight. So I asked Claude: is this compelling enough for me to contravene Arc D (Timebox the Builder)?

Claude's response: "You wrote Arc D yourself. And you wrote it because you know this is exactly what happens. A genuine insight hits, it connects to three different things, the logic for building it right now becomes airtight — and suddenly you're deep in a build while your outreach emails sit unsent."

I pushed back. I'd already sent sixty outreach emails. Five meetings were booked. I'd submitted a substantial proposal. The premise behind "don't build" — that outreach would slip — had changed. The outreach machine was already running.

Claude updated: "That changes things significantly... build, but build bounded."

Then I added a fourth thing to the scope. Claude pushed back again: "We've now got four things on the table. That's a quarter's worth of work, not a sprint."

I decided not to build it. Not because Claude told me not to — because the conversation helped me see clearly that I was going off track. This was the fourth time over the prior weeks that I'd had a similar conversation. Each time, the pattern was the same: a compelling idea, an airtight case, and Claude holding a mirror up to my own priorities until I could see what I was actually doing.

Where This Goes

I'd love to give you a roadmap for how to build this. Maybe in a few months I'll be able to.

But right now, this is an evolving discipline. Every organisation's brain looks different. There's no playbook yet, and the tooling is still catching up.

What I can tell you is that the starting point matters. Starting with tools — which platform, which vendor — puts you on a path where AI stays generic and every team member is on their own. Starting with codification — making the implicit explicit, structuring what your organisation actually knows — puts you on a path where AI gets smarter about your business with every interaction.

This was strategic direction — one domain. Now think about what happens when you layer on everything else. People and culture. Processes and operations. Governance and risk. Connect Claude to your CRM, your accounting system, your project management tool. All of a sudden, AI as a superintelligence — something that actually knows your business deeply enough to reason about it — starts to become a real possibility.

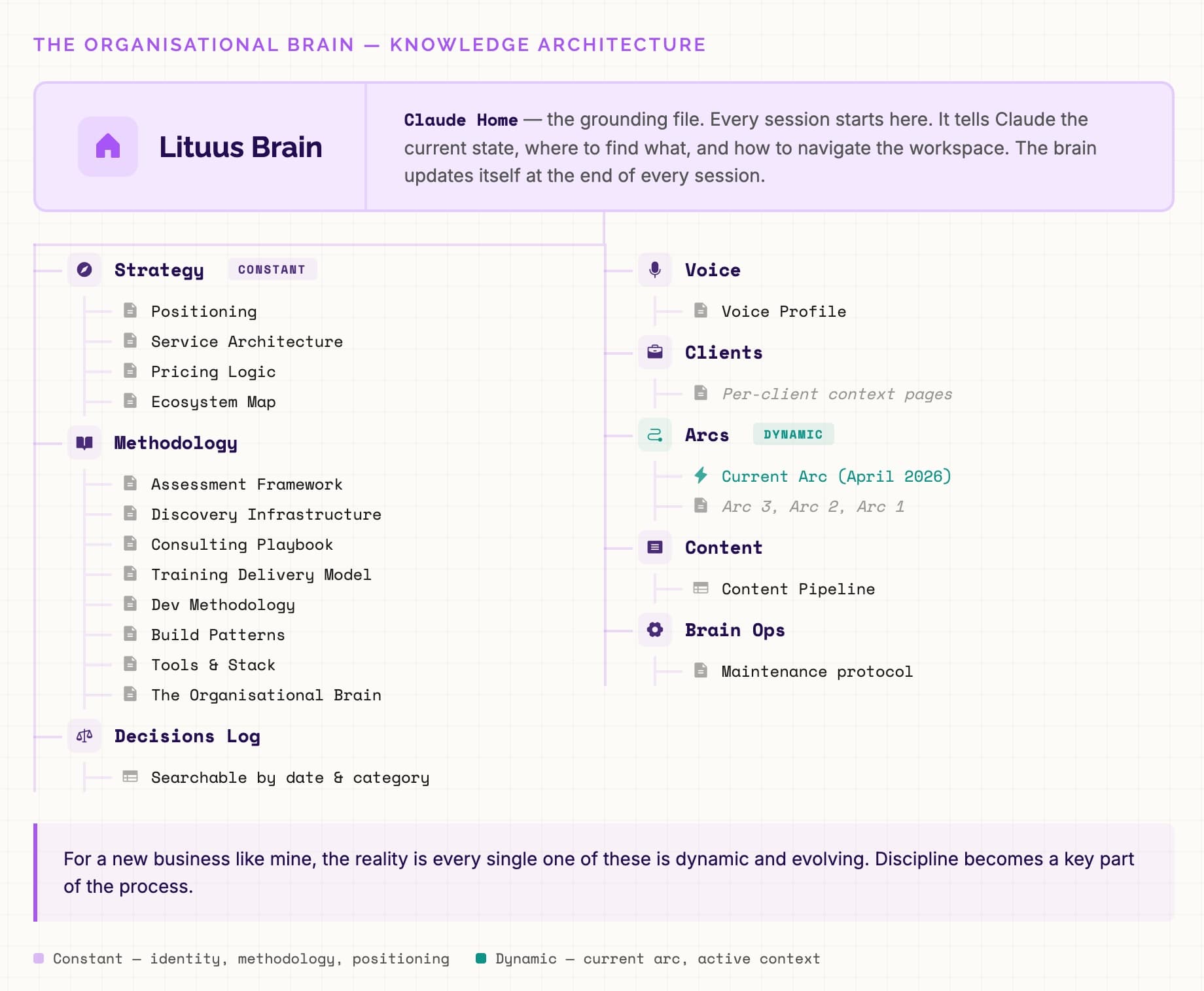

Here's what my own organisational brain looks like in Notion:

Yours will look very different. And part of the reason I've been able to build this is that codification is one of my individual superpowers. I'm good at pattern recognition — finding the consistent, repeatable structures inside messy processes and making them explicit. That's not a skill everyone has, and it doesn't need to be. What matters is that someone in your organisation starts the work: capturing how you actually operate and structuring it so AI can reason over it.

Start with what AI doesn't know about you. That's where the real leverage is.

Louis Razuki

Founder & Guide

I write about working with AI — the tools, the mindsets, the builds that actually deliver. Three years of daily AI practice distilled into experiments, insights, and honest takes on what's real and what's just hype.